A Vision for Catgrad

I’ve just released catgrad version 0.2.0, a “categorical compiler for deep learning”. This blog post is a little overview of where catgrad is now, and where it’s going.

tl;dr: ultimately, I want catgrad to be a general purpose “differentiable categorical array programming language” usable for not only AI/ML, but also general purpose GPU programming. It should be easier to write simulation code that runs on the GPU and is directly rendered; imagine a voxel game with LLM agents whose inference is done in a compute shader!

What catgrad is now

Right now, catgrad is a deep learning framework (like pytorch and tinygrad) with a novel twist: models are compiled into their reverse passes. Here’s an excerpt from catgrad’s README explaining what that means.

Here is a linear model in catgrad:

    model = layers.linear(BATCH_TYPE, INPUT_TYPE, OUTPUT_TYPE)

catgrad can compile this model…

    CompiledModel, _, _ = compile_model(model, layers.sgd(learning_rate), layers.mse)

… into static code like this…

    class CompiledModel:
        backend: ArrayBackend

        def predict(self, x1, x0):
            x2 = x0 @ x1
            return [x2]

        def step(self, x0, x1, x9):
            x4, x10 = (x0, x0)
            x11, x12 = (x1, x1)
            x16 = self.backend.constant(0.0001, Dtype.float32)
            # ... snip ...
            x18 = x17 * x5
            x2 = x10 - x18
            return [x2]

… so you can train your model by just iterating step; no autograd needed:

    for i in range(0, NUM_ITER):
        p = step(p, x, y)

The 0.2.0 release of catgrad includes just enough features to build a GPT model, but catgrad is still in the early stages. The rest of this post explains a bit about how catgrad works, its philosophy, and a vision of where it’s going.
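To make "compiled into its reverse pass" a bit more concrete, here is a hand-written NumPy sketch of the kind of computation a `step` function performs for a linear model trained with SGD on a squared-error loss. This is not catgrad's generated code: the function names, the argument order, and the exact scaling of the loss gradient are assumptions made for illustration.

```python
import numpy as np

def predict(p, x):
    # Forward pass of a linear model: a single matrix multiplication.
    return x @ p

def step(p, x, y, learning_rate=1e-4):
    # Forward pass.
    y_hat = x @ p
    # Gradient of the loss 0.5 * sum((y_hat - y) ** 2) with respect to y_hat.
    dy = y_hat - y
    # Reverse pass of the matmul: pull dy back onto the parameters.
    dp = x.T @ dy
    # SGD update: new parameters = old parameters - learning_rate * gradient.
    return p - learning_rate * dp

# Usage: recover a known weight matrix from synthetic data.
rng = np.random.default_rng(0)
x = rng.normal(size=(32, 4))
true_p = rng.normal(size=(4, 2))
y = x @ true_p

p = np.zeros((4, 2))
for i in range(2000):
    p = step(p, x, y, learning_rate=5e-3)
```

Note that `step` contains only array operations. There is no tape and no graph traversal at run time, which is the property the compiled model above relies on.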

A Differentiable Categorical Array Programming Language

Eventually, catgrad will be a (1) categorical (2) differentiable (3) array programming language. What does this mean? I’ll address these three parts one-by-one.

Catgrad is Categorical

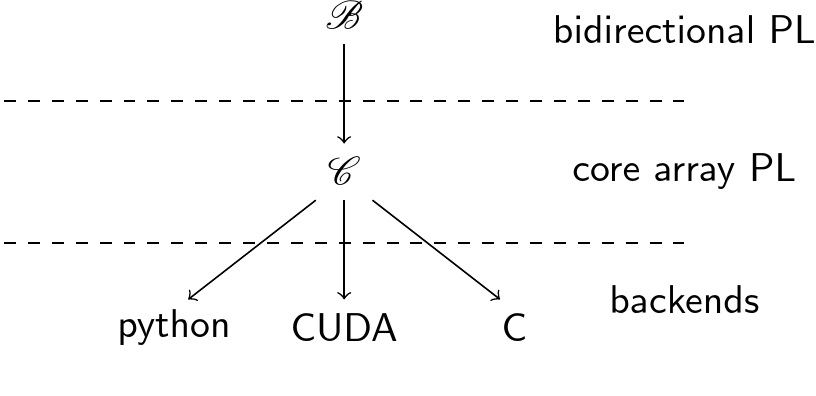

Internally, catgrad compiler works as a “stack” of categories with functors between them. Currently, that stack of categories looks like this:

A “program” in catgrad is a morphism in a symmetric monoidal category. Currently, you build that morphism by tensor and composition of primitives in the category \(\mathscr{B}\) of “bidirectional programs”. Compiling a program (morphism) means applying a functor to map it into catgrad’s “core array language” \(\mathscr{C}\), and then down into a specific backend.
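As a rough illustration of "building a morphism by tensor and composition", here is a toy sketch in plain Python. The `Morphism` class, the `>>` and `@` operators, and the primitives `copy`, `add`, `mul` and `identity` are all hypothetical names invented for this example; they are not catgrad's API, and this toy ignores most of what a real symmetric monoidal category needs (symmetries, for instance).

```python
from dataclasses import dataclass
from typing import Callable, Tuple

@dataclass(frozen=True)
class Morphism:
    """A toy morphism: a typed function from a tuple of inputs to a tuple of outputs."""
    source: Tuple[str, ...]
    target: Tuple[str, ...]
    fn: Callable[..., tuple]

    def __rshift__(self, other):
        # Sequential composition f >> g: only defined when the types line up.
        if self.target != other.source:
            raise TypeError(f"cannot compose {self.target} with {other.source}")
        return Morphism(self.source, other.target,
                        lambda *xs: other.fn(*self.fn(*xs)))

    def __matmul__(self, other):
        # Tensor product f @ g: run f and g side by side on disjoint inputs.
        n = len(self.source)
        return Morphism(self.source + other.source,
                        self.target + other.target,
                        lambda *xs: self.fn(*xs[:n]) + other.fn(*xs[n:]))

    def __call__(self, *xs):
        return self.fn(*xs)

# Hypothetical primitives over a single object "R".
copy = Morphism(("R",), ("R", "R"), lambda x: (x, x))
add = Morphism(("R", "R"), ("R",), lambda x, y: (x + y,))
mul = Morphism(("R", "R"), ("R",), lambda x, y: (x * y,))
identity = Morphism(("R",), ("R",), lambda x: (x,))

# The program (x, y) -> x * x + y, built only from tensor and composition.
square = copy >> mul
program = (square @ identity) >> add
```

Calling `program(3.0, 4.0)` evaluates the composite. In this picture a compiler pass would be a function from morphisms built over one set of primitives to morphisms built over another.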

The core array language in version 0.2.0 is quite primitive - it’s a bit like a low-level, typed numpy. The main missing features are general reduction operations and a way to write custom kernels - I’m planning to fix this by adding another category to the stack which looks a bit like triton.

Catgrad is Differentiable

I just told you that programs in catgrad are morphisms. More concretely, catgrad represents each morphism as an open hypergraph. (For the category theorists, this means that a program in each layer of the stack is a morphism in the free symmetric monoidal category presented by some generating objects and operations.)
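For readers who haven't met open hypergraphs before, here is a minimal sketch of the idea. The class and field names are made up for this post and are not the actual datastructure used by catgrad; in particular, it uses plain Python lists for readability rather than the flat arrays a data-parallel implementation would want. It also assumes edges are stored in an order where every edge's inputs have already been computed.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

@dataclass
class Hyperedge:
    label: str          # name of the operation
    sources: List[int]  # ordered input node ids
    targets: List[int]  # ordered output node ids

@dataclass
class OpenHypergraph:
    node_labels: List[str]   # one type label per node (a "wire")
    edges: List[Hyperedge]   # operations connecting wires
    inputs: List[int]        # nodes on the left boundary
    outputs: List[int]       # nodes on the right boundary

def evaluate(graph: OpenHypergraph, ops: Dict[str, Callable], args: list) -> list:
    # Assign boundary inputs, then run each edge in the stored order.
    values = dict(zip(graph.inputs, args))
    for edge in graph.edges:
        results = ops[edge.label](*[values[s] for s in edge.sources])
        for node, result in zip(edge.targets, results):
            values[node] = result
    return [values[n] for n in graph.outputs]

# The program (x, y) -> x * x + y. Copying is an explicit operation, so each
# wire is produced at most once and consumed at most once.
graph = OpenHypergraph(
    node_labels=["R"] * 6,
    edges=[
        Hyperedge("copy", sources=[0], targets=[2, 3]),
        Hyperedge("mul", sources=[2, 3], targets=[4]),
        Hyperedge("add", sources=[4, 1], targets=[5]),
    ],
    inputs=[0, 1],
    outputs=[5],
)

ops = {
    "copy": lambda x: (x, x),
    "mul": lambda a, b: (a * b,),
    "add": lambda a, b: (a + b,),
}
result = evaluate(graph, ops, [3.0, 4.0])
```

The point to notice is that the whole program is a handful of integer lists, with no nested tree to recurse over.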

We use this graph-based datastructure instead of an AST for the following reasons:

- It’s data-parallel & runs on the GPU.

  - This means our compiler itself can be GPU-accelerated…

  - … but even on CPU it scales to very large programs …

  - … which we need to represent very large circuits for e.g., FPGA backends.

- It corresponds directly to mathematical definitions

- We can use the algorithm in Section 10 of this paper to differentiate programs.

The last of these reasons is (of course) most important for our initial use-case of machine learning. In a nutshell, this allows us to take a program \(p\) and produce a program \(R[p]\) which computes the gradients of \(p\). This matters because it means you can train a neural network without needing a framework at train-time. No more 5GB pytorch dependencies!
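Here is a small sketch of what "a program \(R[p]\) which computes the gradients of \(p\)" means for a composite program. It is the ordinary chain rule written in reverse mode for scalar functions, not the hypergraph-based algorithm from the paper, and the `Op` class and its `then` method are hypothetical names. Each primitive carries a forward map and a reverse map, and composition builds the reverse map of the composite from the reverse maps of the parts.

```python
from dataclasses import dataclass
from typing import Callable

@dataclass(frozen=True)
class Op:
    fwd: Callable[[float], float]         # x -> f(x)
    rev: Callable[[float, float], float]  # (x, dy) -> f'(x) * dy

    def then(self, other: "Op") -> "Op":
        # Reverse-mode chain rule:
        #   R[f ; g](x, dz) = R[f](x, R[g](f(x), dz))
        return Op(
            fwd=lambda x: other.fwd(self.fwd(x)),
            rev=lambda x, dz: self.rev(x, other.rev(self.fwd(x), dz)),
        )

# Two primitives, each with a hand-written reverse map.
square = Op(fwd=lambda x: x * x, rev=lambda x, dy: 2 * x * dy)
scale3 = Op(fwd=lambda x: 3 * x, rev=lambda x, dy: 3 * dy)

# p(x) = 3 * x^2, so dp/dx = 6 * x.
p = square.then(scale3)
value = p.fwd(2.0)
gradient = p.rev(2.0, 1.0)
```

Because `p.rev` is itself just a program built from the same kind of pieces, it can be compiled and shipped like any other program, which is why no framework is needed at train-time.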

Catgrad is an Array Programming Language

This part’s aspirational.

I already told you that catgrad version 0.2.0 is like a low-level, typed NumPy, but what’s it going to be like in the future?

In short: a general purpose array programming language which is useful not just for AI / ML workloads, but also for general GPU / parallel programming.

Some applications which I’d like catgrad to eventually support are:

- Deep learning (obviously)

- Graphics programming (imagine writing your compute and render pipelines in 50 lines of code instead of 5000 + a bunch of glue in C++)

- DSP, FPGA, and embedded parallel programming

In general, the philosophy of catgrad is as follows:

- Compiler-as-library: Instead of building a monolithic compiler, a library of compiler passes should be made available to the user

- Foundations first: Design the language & compiler around sound mathematical foundations (i.e., category theory)

- Data-parallel compilation: compilers should be fast! We should be able to compile code on the GPU!

Hellas, Catgrad, and You

I’m currently working at hellas.ai, where catgrad and open-hypergraphs are a big part of our stack.[1] We’ll soon be hiring, so if this sounds like something you’d want to work on, email me at paul@hellas.ai - start your subject line with “HIRING”.

[1] The most important way catgrad helps us is in circuitizing the training code for Zero-Knowledge ML training.